********** EUROPE **********

At least 150 people missing after boat capsizes off coast of Mauritania

Wed, 24 Jul 2024 19:01:47 GMT

Boat full of people hoping to get to Europe overturns and at least 15 known to have died, UN migration agency says

At least 15 people have died and more than 150 are missing after a boat full of people hoping to make it to Europe capsized off the coast of Mauritania, according to the International Organization for Migration (IOM).

About 300 people had boarded the long, wooden, fishing vessel in The Gambia, roughly 850 miles (1,350km) to the south, spending seven days at sea before the boat overturned on Monday, the agency said in a statement.

Rico Krieger admits role in Ukrainian plot and pleads for German chancellor to save him during broadcast

A German man sentenced to death in Belarus has appeared on state television in the country, in tears and begging the German government to intervene in his case.

“Mr Scholz, please, I am still alive … it is not yet too late,” said Rico Krieger, who was pictured handcuffed inside a cell, appealing to the German chancellor, Olaf Scholz.

Arsonists used crude methods but disruption to opening of the Olympic Games in Paris was severe

It was about 1.15am when the SNCF maintenance workers, carrying out repairs by moonlight, spotted the group of people a little further down the railway line near a signal box outside the sleepy village of Vergigny, in the northern French department of Yonne.

They were concerned enough by the unlikely sight at such an hour to approach the intruders, and then to make a call to the local police as those they had interrupted ran off into the dark.

An armada of boats carrying athletes along the Seine, dangling dancers and parading drag queens – all under torrential rain

The Paris Olympic Games opened on Friday night with a high-kitsch, riverside spectacle, as an armada of boats carried athletes along the Seine, dancers dangled from high poles, drag queens paraded on bridges and the Olympic rings lit up the Eiffel Tower – all under unrelenting, torrential rain.

France had promised its opening ceremony would be the biggest open-air show on Earth. More than 300,000 people watched from the riverside and bridges – and hundreds more stood at windows and balconies – as a show of dance, live music and acrobatics unfolded along more than 6km of river from the Pont d’Austerlitz to the Eiffel Tower.

Vyacheslav Zinchenko, 18, remanded in custody for 60 days after Iryna Farion shot in Lviv on 19 July

A Ukrainian court has remanded an 18-year-old man in custody over the murder of a nationalist former lawmaker, state media reported.

Iryna Farion – a divisive hardline campaigner against the use of Russian language – was shot near her flat in the western city of Lviv on 19 July.

Police searched houses across country on eve of Olympic opening ceremony in neighbouring France

Three suspected members of Islamic State’s Afghan branch, Islamic State Khorasan, have been charged in Belgium with planning a terrorist attack.

Police released four other people who had also been detained during searches of houses across the country on Thursday, three of them after being questioned by an investigative judge, the state prosecutor’s office said.

Hundreds of staff at the fashion chain reportedly told they will lose their jobs

Ted Baker could disappear from British high streets as the struggling fashion chain plans to shut all its stores within weeks.

The business behind the fashion brand’s UK shops, No Ordinary Designer Label Limited (NODL), entered administration in March.

Irish singer’s brother speaks of shock at ‘hideous’ figure which ‘looked nothing like her’

Dublin’s wax museum is withdrawing a figure of Sinéad O’Connor amid criticism from her family and members of the public that it looked “nothing like her”.

Many reacted with shock when the waxwork figure was unveiled on Thursday.

Märtha Louise is not allowed to use her title commercially after renouncing her royal duties two years ago

A letter has claimed that the Norwegian princess Märtha Louise was more deeply involved with a gin launched to mark her forthcoming wedding than previously stated, amid growing questions over the use of her name on the bottle.

The royal, who will marry the American businessman Durek Verrett in a four-day fjord-side wedding in Geiranger, Norway, next month, is not permitted to use her princess title in commercial contexts.

Prosecutors open formal investigation after coordinated attacks cause severe disruption on France’s busiest lines

Prosecutors have opened a criminal investigation after saboteurs attacked France’s high-speed railway network in a series of “malicious acts” that brought chaos to the country’s busiest rail lines hours before the Olympic opening ceremony.

The state-owned railway operator, SNCF, said arsonists targeted installations along high-speed TGV lines connecting Paris with the country’s west, north and east, and traffic would be severely disrupted across the country into the weekend.

The Guardian’s picture editors select photographs from around the world

Reimagined Y2K-era micro-jacket is ideal for erratic weather and Olivia Rodrigo to Bella Hadid are early adopters

Emily in Paris, the hit Netflix show that follows the American expat Emily Cooper as she navigates the French capital, is known for outraging Parisians with its cliches about berets, their rudeness and fondness for long lunches. However, it was millennials this week who were left horrified after the release of a trailer for its highly anticipated fourth season. In the sneak peek, its protagonist, Emily, is seen wearing a bright pink bolero, reminiscent of the tiny shrugs that dominated wardrobes circa 2000.

“Been there, done that, no need to revisit,” reads one comment on social media. “Hideous,” reads another. One user simply wrote “NO”.

European visitor rushed to hospital after briefly walking barefoot in California national park amid extreme heat

A European visitor got third-degree burns on his feet while briefly walking barefoot on the sand dunes in California’s Death Valley national park over the weekend, park rangers said Thursday.

The rangers said the visitor was rushed to a hospital in nearby Nevada. Because of language issues, the rangers said they were not immediately able to determine whether the 42-year-old Belgian’s flip-flops had somehow been broken or were lost at Mesquite Flat Sand Dunes during a short Saturday walk.

He may have been one of Britain’s most successful ever athletes, but Christie’s triumphs opened him up to abuse from the press, the police – and sexual harassment

“I am so proud of being British,” says Linford Christie. Watching this painful hour and a half-long portrait of one of Britain’s most accomplished yet controversial athletes, it’s hard to figure out why. England’s footballers may be enduring 58 years of hurt, but Christie runs them a close second.

When Christie sensationally won gold in the 100m final of the 1986 European Championships in Stuttgart (“I must have been ranked about 15th”), he celebrated on track by draping the union jack over his shoulders – only to be ticked off. Now 64, Christie recalls being told by a British official that it was not the done thing: “He meant it was not the done thing for a Black person.”

Readers respond to Emma Beddington’s article about having the option of at-home smear tests

I used to share Emma Beddington’s dislike of smear tests and was further outraged to discover that here in Spain they are not performed in your local GP surgery (DIY smear tests are on their way? I’ll be first in the queue, 22 July). Instead I had to make an appointment with a gynaecologist in a hospital an hour’s drive away. It turned out that this huffed-about visit would save my life.

A smear test can’t diagnose ovarian cancer, but a good gynaecologist taking advantage of a smear appointment to perform an exam can. The doctor discovered a 13cm tumour on my right ovary. He immediately sent me for blood tests and made some referrals. Within two weeks of my smear, I found myself sitting opposite two onco-gynae surgeons – who operated on me a week later.

Ahead of Netanyahu’s speech to Congress, rights groups decried the secret social media campaign to prop up support for Israel.

The post Rights Groups Demand Biden Give Answers on Israel’s Secret Influence Campaign on Congress appeared first on The Intercept.

Social media platform uses pre-ticked boxes of consent, a practice that violates UK and EU GDPR rules

Elon Musk’s X platform is under pressure from data regulators after it emerged that users are consenting to their posts being used to build artificial intelligence systems via a default setting on the app.

The UK and Irish data watchdogs said they have contacted X over the apparent attempt to gain user consent for data harvesting without them knowing about it.

Singer, who hasn’t performed onstage since 2020 as a result of her health, brought down the house with a breathtaking take on an Edith Piaf classic

The casual sports fans of the world endured four hours of rambling, chaotic, rainy pomp and circumstance along the Seine on Friday evening for one reason: to possibly see Céline Dion return to the stage. The 56-year-old French Canadian singer has not performed in over four years, owing to a rare, incurable neurological disorder called stiff person syndrome. Despite struggling with uncontrollable muscle spasms extreme enough to break ribs, Dion, a true-blue born performer, promised to one day return. “If I can’t run, I’ll walk. If I can’t walk, I’ll crawl,” she said in her recent documentary I Am: Céline Dion. “And I won’t stop. I won’t stop.”

On a soggy Friday night in Paris, at the tail end of the Olympic opening ceremonies, Dion did more than just return – she triumphed. Bedecked in silver sparkles, accompanied by a rain-soaked piano on the steps of the Eiffel Tower, she not only sang Edith Piaf’s Hymne A L’Amour – which, truly, would have been more than enough – but performed it with the gusto of someone who, by her own admission, longs to resume touring more than her fans. If you have seen the documentary, then you know it is nearly impossible to fathom the amount of medicine and therapy, on top of bottomless grit and determination, required for Dion to retake the stage, let alone be the capstone performance at France’s Olympics, let alone do it well, with palpable, distinctive vocal power and without seeming to miss a note. She is, as pop singer Kelly Clarkson put it on the American NBC broadcast, a “vocal athlete”.

James Wilburn, father of Black woman shot dead by Illinois police, says vice-president ‘let us know she is with us 100%’

Vice-President Kamala Harris on Friday called the family of Sonya Massey, a 36-year-old Black woman who was fatally shot by a sheriff’s deputy in her Illinois home, according to Massey’s family members, who spoke to NBC News.

Massey was killed on 6 July after she called the Sangamon county sheriff’s office because she was afraid there might be a prowler outside, according to an attorney for her family and Illinois state police.

Singer, who cancelled tour dates as a result of stiff person syndrome, makes comeback with Edith Piaf rendition

Céline Dion made a triumphant return to the stage at the opening ceremony of the Paris Olympics.

The star, who has been diagnosed with the neurological disorder stiff person syndrome, sang Edith Piaf’s Hymne A L’Amour at the Eiffel Tower for a global audience of millions, her first live on-stage performance since early 2020.

The Miami Dolphins signed quarterback Tua Tagovailoa to a four-year contract extension valued at a franchise-record $212.4m, according to media reports on Friday.

At an average of $53.1m per year, Tagovailoa will rank third in the NFL in quarterback pay behind Jacksonville’s Trevor Lawrence and Cincinnati’s Joe Burrow. The deal includes $167m guaranteed, eighth most among quarterbacks.

ESPN first reported the extension, attributing the terms to the agency that represents Tagovailoa, Athletes First. The Dolphins did not announce the extension, though the team did post a video of Tagovailoa on social media Friday afternoon.

Tagovailoa was still playing under the contract he signed when the Dolphins made him the fifth overall selection of the 2020 draft. Tagovailoa was looking for a contract similar to those signed by Burrow and Justin Herbert, who were drafted the same year. After their rookie deals, Burrow and Herbert signed multiyear contracts in excess of $200m.

Throughout negotiations, Tagovailoa participated in the team’s offseason workouts and parts of the first few days of training camp. He was a full participant on Friday.

Tagovailoa, who sustained multiple concussions his first three NFL seasons, positioned himself for a big pay bump with an injury-free and productive 2023. He threw for 29 touchdowns and a league-best 4,624 yards.

The Dolphins reached the postseason but were eliminated in the first round by eventual Super Bowl champion Kansas City, extending to 24 years Miami’s stretch without a playoff win.

The contract extension will keep Tagovailoa with Miami through 2028.

US announces arrest of two leaders of organised crime group as Mexican authorities say they were in the dark

The Mexican president, Andrés Manuel López Obrador, has called for “transparency” after the sudden and secretive arrests by US authorities of two top leaders of the Sinaloa cartel, one of Mexico’s most powerful organised crime groups.

Ismael “El Mayo” Zambada García, 76, founded the Sinaloa cartel with Joaquín “El Chapo” Guzmán Loera, and has been a top target of US law enforcement for decades, with a $15m bounty on his head.

Judith Monarrez says she never stopped looking for her chihuahua after he escaped from the backyard in 2015

Judith Monarrez crumpled onto her kitchen floor and wept when the news arrived in an email: Gizmo, her pet dog missing for nine years, had been found alive.

Monarrez was 28 and living with her parents in 2015 when Gizmo, then two years old, slipped past a faulty gate in the backyard of their home in Las Vegas.

Labour government announces its biggest step yet in overhauling the UK’s approach to the Middle East

Labour has announced its biggest step yet in overhauling the UK’s approach to the Middle East, dropping its opposition to an international arrest warrant against Benjamin Netanyahu despite pressure from Washington not to do so.

Downing Street announced on Friday that the government would not submit a challenge to the jurisdiction of the international criminal court, whose chief prosecutor, Karim Khan, is seeking a warrant against the Israeli prime minister.

Rachel Reeves is expected to accept pay review body recommendations in move that could cost up to £10bn

Millions of public sector workers are set for an above-inflation pay rise due to be announced by Rachel Reeves next week after more than a decade of austerity.

The chancellor is expected to accept the recommendations of public sector pay bodies for pay increases on Monday – a move economists believe could cost up to £10bn.

More than 120,000 heat-related ER visits were tracked in 2023, as people struggle in record-breaking temperatures

In his 40 years in the emergency room, David Sklar can think of three moments in his career when he was terrified.

“One of them was when the Aids epidemic hit, the second was Covid, and now there’s this,” the Phoenix physician said, referring to his city’s unrelenting heat. Last month was the city’s hottest June on record, with temperatures averaging 97F (36C), and scientists say Phoenix is on track to experience its hottest summer on record this year.

The British musician’s new album is everywhere but it took her 15 years to go from Myspace to the White House

As someone who existed outside the mainstream for much of her early career, Charli xcx has come a long way. The British pop star who was first noticed via her Myspace page is not only responsible for the meme of the summer, she has even become an influential factor in the turbulent presidential elections across the Atlantic.

“Can’t believe Charli xcx is successfully doing foreign intervention in a US election as an album marketing tactic,” one fan posted on X after Kamala Harris’s campaign fully embraced the singer’s endorsement.

Danielle Smith and her government’s refusal to combat global heating is said to have made blazes more intense

When Danielle Smith, premier of Alberta, began her grim update about the wildfire damage to Jasper, the famed mountain resort in the Canadian Rockies, her voice slipped and she held back tears.

Hours earlier, a fast-moving wildfire tore through the community, incinerating homes, businesses and historic buildings. She praised the “true heroism” of fire crews who had rushed in to save Jasper, only to be pulled back when confronted by a 400ft wall of flames. She spoke about the profound meaning and “magic” of the national park.

Strength of PM’s crackdown shows her nervousness and that climate of fear is breaking down, say critics

Hasan still has the metal pellets Bangladesh police fired at him lodged deep in his bones. Fearful he will join the growing ranks of those thrown behind bars by the state for participating in protests that have swept Bangladesh this month, Hasan has been in hiding for a week and described his state as one of “constant panic and trauma”.

“Whenever I hear the sound of a car or a motorbike, I think it might be the police coming for me,” he said.

We’d like to hear how people are experiencing travel disruptions ahead of the Olympic Games in Paris

France’s high-speed rail network has been hit by coordinated “malicious acts” including arson attacks that have brought major disruption to many of the country’s busiest rail lines hours before the Paris Olympics opening ceremony.

Eurostar journeys are also affected: eighteen Eurostar trains were due to run between London and Paris, but an unknown number have been cancelled. Travellers from London to Paris face 90-minute delays and train cancellations on the day of the Olympic Games opening ceremony.

Policymakers and funders are being urged to invest in training a workforce to serve the industries of the future

A greener economy could bring millions of jobs to some of the largest countries in Africa, according to a new report.

Research by the development agency FSD Africa and the impact advisory firm Shortlist predicts that 3.3 million jobs could be generated across the continent by 2030.

Non-cancer electives could be delayed as early as next week as TGA issues shortage alert for multiple products used in surgery and critical care

Surgeries could be cancelled as soon as next week due to an “unprecedented” shortage of intravenous fluids in Australian hospitals, the peak doctors’ body has warned.

The medicines regulator, the Therapeutic Goods Administration (TGA), issued a shortage alert on Friday for multiple intravenous (IV) fluid products, which are used in surgery and critical care.

Sean Grayson, who is now charged with murder in the fatal shooting of Sonya Massey, was previously discharged from the U.S. Army for serious misconduct, yet was still hired at six police departments in Central Illinois.

The post Deputy Accused of Killing Sonya Massey Was Discharged From Army for Serious Misconduct appeared first on The Intercept.

Brazil court freezes assets of Dirceu Kruger to pay climate compensation for illegal deforestation

A Brazilian cattle rancher has been ordered to pay more than $50m (£39m) for destroying part of the Amazon rainforest and ordered to restore the precious carbon sink.

Last week, a federal court in Brazil froze the assets of Dirceu Kruger to pay compensation for the damage he had caused to the climate through illegal deforestation. The case was brought by Brazil’s attorney general’s office, representing the Brazilian institute of environment and renewable natural resources (Ibama). It is the largest civil case brought for climate crimes in Brazil to date and the start of a legal push to repair and deter damage to the rainforest.

Residents evacuated in Metro Manila and nearby provinces as flooding wreaks havoc

This is a fantastic video. It’s an Iranian spider-tailed horned viper (Pseudocerastes urarachnoides). Its tail looks like a spider, which the snake uses to fool passing birds looking for a meal.

Video:

00:18:15

ESA Director General Josef Aschbacher announces the first two astronaut missions for the new ESA astronaut class of 2022 on the first day of the Space Council, held in Brussels on 22 and 23 May 2024.

ESA's most recent class of astronauts selected in 2022 includes Sophie Adenot, Pablo Álvarez Fernández, Rosemary Coogan, Raphaël Liégeois, and Marco Sieber. They recently completed one year of basic training and graduated as ESA astronauts on 22 April at ESA's European Astronaut Centre in Germany, making them eligible for spaceflight. During their missions aboard the International Space Station, ESA astronauts will engage in a diverse range of activities, from conducting scientific experiments and medical research to Earth observation, outreach and operational tasks.

Israel’s occupation of Palestinian territories is illegal, a form of apartheid, and must end, says the U.N.’s high court at The Hague.

The post The ICJ Ruling Confirms What Palestinians Have Been Saying for 57 Years appeared first on The Intercept.

Image:

The Orion vehicle that will bring astronauts around the Moon and back for the first time in over 50 years was recently tested in a refurbished altitude chamber used during the Apollo era.

Engineers tested Orion in a near-vacuum environment designed to simulate the space conditions the vehicle will travel through during its mission towards the Moon. Teams emptied the altitude chamber of air, a process taking up to a day, to create an environment with a pressure more than 2000 times lower than that inside your vacuum cleaner. Orion remained in the altitude chamber’s low-pressure environment for around a week, with engineering teams monitoring the spacecraft’s systems and collecting data to qualify Orion for safely flying the Artemis II crew through the harsh environment of space.

The next step for Orion will take place after the summer: the installation of its four seven-metre-long solar arrays, which the European Service Module (ESM) will use to power the vehicle and its crew of four towards the Moon and back during the Artemis II mission.

Rachid Amekrane, Orion-ESM US Campaign Lead at Airbus, stands next to the Orion spacecraft inside the altitude chamber at NASA’s Kennedy Space Center in Florida. Next to his hand are four nozzles; these are some of the reaction control system engines of the ESM. In total, there are 33 engines on the ESM: 24 reaction control system engines, eight auxiliary thrusters and a Shuttle-era main engine.

Video:

00:38:42

ESA astronaut Alexander Gerst, an experienced spaceflyer, spacewalker, and former ISS commander, shares insights into his role as head of astronaut operations at ESA’s European Astronaut Centre. Tune in as he talks with us about guiding the next generation of astronauts through training and preparing them for their future in space exploration.

This is Episode 8 of our ESA Explores podcast series, delving into everything you want to know about the ESA astronaut class of 2022. Recorded in April 2024.

Find out more about Alexander.

Access all ESA Explores podcasts.

ESA’s Earth Return Orbiter, the first spacecraft that will rendezvous with and capture an object in orbit around another planet, has passed a key milestone in the effort to bring the first Mars samples back to Earth.

Video:

00:24:06

John McFall, a member of the European astronaut reserve from the ESA astronaut class of 2022, brings a diverse background to his role. With experience as an orthopaedic and trauma surgeon and a former Paralympic sprinter, John is participating in the groundbreaking "Fly!" feasibility study. This initiative seeks to enhance our comprehension of the challenges posed by space flight for astronauts with physical disabilities, aiming to overcome these barriers. Tune in to discover more about John and the "Fly!" project.

This is Episode 9 of our ESA Explores podcast series, delving into everything you want to know about the ESA astronaut class of 2022. Recorded in November 2023.

Find out more about John.

About the ESA astronaut class of 2022.

Hosted by Laura Zurmühlen, with audio editing and music by Denzel Lorge, and cover art by Gaël Nadaud.

Access all ESA Explores podcasts.

When hypersonic aeronautics and space exploration meet, European engineers dream of a future fast-track return-ticket to space.

At ILA Berlin, ESA and Vast signed a Memorandum of Understanding for future Vast space stations.

Lunar I-Hab, the next European habitat in lunar orbit as part of the Gateway, has recently undergone critical tests to explore and improve human living conditions inside the space module.

Video:

00:57:15

Watch a replay of the ESA astronaut class of 2022 graduation ceremony.

ESA astronaut candidates Sophie Adenot, Rosemary Coogan, Pablo Álvarez Fernández, Raphaël Liégeois, Marco Sieber and Australian Space Agency astronaut candidate Katherine Bennell-Pegg received astronaut certification at ESA’s European Astronaut Centre on 22 April 2024. This officially marks their transition into fully-fledged astronauts, ready and eligible for spaceflight.

The group was selected in November 2022 and began their year-long basic astronaut training in April 2023.

Basic astronaut training provides the candidates with overall familiarisation and training in various areas, such as spacecraft systems, spacewalking, flight engineering, robotics and life support systems, as well as survival and medical training.

Following certification, the new astronauts will move on to the next phases of pre-assignment and mission-specific training, paving the way for future missions to the International Space Station and beyond.

Security group Magen Am’s staff also includes a former Navy SEAL who posted a video of waterboarding his own child.

The post LA City Council Considers Paying Former IDF Soldiers to Patrol Its Streets appeared first on The Intercept.

We want to hear from people in the UK hit by the two-child limit on their experiences

The prime minister, Keir Starmer, is facing pressure to abolish the two-child limit on universal credit, with the Scottish Labour leader, Anas Sarwar, calling the cap “wrong” and urging Starmer to scrap it.

The limit on universal credit or child tax credit for more than two children (with exceptions for children with disabilities or those born before April 2017) impacts around 450,000 families, including 1.6 million children.

In the rapidly advancing landscape of AI technology, LimeWire emerges as a distinctive platform among generative AI tools. It lets users create AI content and also gives creators ways to share and monetize their creations.

As we explore LimeWire, our aim is to uncover its features, benefits for creators, and the exciting possibilities it offers for AI content generation. This platform presents an opportunity for users to harness the power of AI in image creation, all while enjoying the advantages of a free and accessible service.

Let's unravel the distinctive features that set LimeWire apart in the dynamic landscape of AI-powered tools, understanding how creators can leverage its capabilities to craft unique and engaging AI-generated images.

This revamped LimeWire invites users to register and unleash their creativity by crafting original AI content, which can then be shared and showcased on the LimeWire Studio. Notably, even acclaimed artists and musicians, such as Deadmau5, Soulja Boy, and Sean Kingston, have embraced this platform to publish their content in the form of NFT music, videos, and images.

Beyond providing a space for content creation and sharing, LimeWire introduces monetization models to empower users to earn revenue from their creations. This includes avenues such as earning ad revenue and participating in the burgeoning market of Non-Fungible Tokens (NFTs). As we delve further, we'll explore these monetization strategies in more detail to provide a comprehensive understanding of LimeWire's innovative approach to content creation and distribution.

LimeWire Studio welcomes content creators into its fold, providing a space to craft personalized AI-focused content for sharing with fans and followers. Within this creative hub, every piece of content generated becomes not just a creation but a unique asset—ownable and tradable. Fans have the opportunity to subscribe to creators' pages, immersing themselves in the creative journey and gaining ownership of digital collectibles that hold tradeable value within the LimeWire community. Notably, creators earn a 2.5% royalty each time their content is traded, adding a rewarding element to the creative process.

The platform's flexibility is evident in its content publication options. Creators can choose to share their work freely with the public or opt for a premium subscription model, granting exclusive access to specialized content for subscribers.

LimeWire currently focuses on AI image generation. The platform plans to broaden its offerings by introducing AI music and video generation tools in the near future, which would give creators more avenues for expression and engagement with their audience.

The LimeWire AI image generation tool presents a versatile platform for both the creation and editing of images. Supporting advanced models such as Stable Diffusion 2.1, Stable Diffusion XL, and DALL-E 2, LimeWire offers a sophisticated toolkit for users to delve into the realm of generative AI art.

Much like other tools in the generative AI landscape, LimeWire provides a range of options catering to various levels of complexity in image creation. Users can initiate the creative process with prompts as simple as a few words or opt for more intricate instructions, tailoring the output to their artistic vision.

What sets LimeWire apart is its seamless integration of different AI models and design styles. Users have the flexibility to effortlessly switch between various AI models, exploring diverse design styles such as cinematic, digital art, pixel art, anime, analog film, and more. Each style imparts a distinctive visual identity to the generated AI art, enabling users to explore a broad spectrum of creative possibilities.

The platform also offers additional features, including samplers, allowing users to fine-tune the quality and detail levels of their creations. Customization options and prompt guidance further enhance the user experience, providing a user-friendly interface for both novice and experienced creators.

Excitingly, LimeWire is actively developing its proprietary AI model, signaling ongoing innovation and enhancements to its image generation capabilities. This upcoming addition holds the promise of further expanding the creative horizons for LimeWire users, making it an evolving and dynamic platform within the landscape of AI-driven art and image creation.


Upon completing your creative endeavor on LimeWire, the platform allows you the option to publish your content. An intriguing feature follows this step: LimeWire automates the process of minting your creation as a Non-Fungible Token (NFT), utilizing either the Polygon or Algorand blockchain. This transformative step imbues your artwork with a unique digital signature, securing its authenticity and ownership in the decentralized realm.

Creators on LimeWire hold the power to decide the accessibility of their NFT creations. By opting for a public release, the content becomes discoverable by anyone, fostering a space for engagement and interaction. Furthermore, this choice opens the avenue for enthusiasts to trade the NFTs, adding a layer of community involvement to the artistic journey.

Alternatively, LimeWire acknowledges the importance of exclusivity. Creators can choose to share their posts exclusively with their premium subscribers. In doing so, the content remains a special offering solely for dedicated fans, creating an intimate and personalized experience within the LimeWire community. This flexibility in sharing options emphasizes LimeWire's commitment to empowering creators with choices in how they connect with their audience and distribute their digital creations.

After creating your content, you can choose to publish the content. It will automatically mint your creation as an NFT on the Polygon or Algorand blockchain. You can also choose whether to make it public or subscriber-only.

If you make it public, anyone can discover your content and even trade the NFTs. If you choose to share the post only with your premium subscribers, it will be exclusive only to your fans.

Additionally, you can earn ad revenue from your content creations as well.

When you publish content on LimeWire, you will receive up to 70% of all ad revenue from other users who view your images, music, and videos on the platform, depending on your plan.

This revenue model will be much more beneficial to designers. You can experiment with the AI image and content generation tools and share your creations while earning a small income on the side.

The revenue you earn from your creations will come in the form of LMWR tokens, LimeWire’s own cryptocurrency.

Your earnings will be paid every month in LMWR, which you can then trade on many popular crypto exchange platforms like Kraken, ByBit, and UniSwap.

You can also use your LMWR tokens to pay for prompts when using LimeWire generative AI tools.

You can sign up to LimeWire to use its AI tools for free. You will receive 10 credits to use and generate up to 20 AI images per day. You will also receive 50% of the ad revenue share. However, you will get more benefits with premium plans.

For $9.99 per month, you will get 1,000 credits per month, up to 2,000 image generations, early access to new AI models, and 50% ad revenue share.

For $29 per month, you will get 3,750 credits per month, up to 7,500 image generations, early access to new AI models, and 60% ad revenue share.

For $49 per month, you will get 5,000 credits per month, up to 10,000 image generations, early access to new AI models, and 70% ad revenue share.

For $99 per month, you will get 11,250 credits per month, up to 22,500 image generations, early access to new AI models, and 70% ad revenue share.

With all premium plans, you will receive a Pro profile badge, full creation history, faster image generation, and no ads.
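To compare the premium plans, it can help to work out the price per credit. The sketch below uses only the prices and credit amounts listed above; the dictionary keys and the `price_per_credit` helper are illustrative names, not anything LimeWire provides.

```python
# Monthly price (USD) mapped to monthly credits, as listed in the plans above.
PLANS = {
    9.99: 1_000,
    29.00: 3_750,
    49.00: 5_000,
    99.00: 11_250,
}


def price_per_credit(monthly_price, credits):
    """Return the cost of a single credit in USD."""
    return monthly_price / credits


for price, credits in PLANS.items():
    print(f"${price:.2f}/month: ${price_per_credit(price, credits):.4f} per credit")

# The plan with the lowest cost per credit (hypothetical comparison helper).
cheapest = min(PLANS, key=lambda p: price_per_credit(p, PLANS[p]))
```

Price per credit is only one factor; the ad revenue share also differs between plans.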


In conclusion, LimeWire emerges as a democratizing force in the creative landscape, providing an inclusive platform where anyone can unleash their artistic potential and effortlessly share their work. With the integration of AI, LimeWire eliminates traditional barriers, empowering designers, musicians, and artists to publish their creations and earn revenue with just a few clicks.

The ongoing commitment of LimeWire to innovation is evident in its plans to enhance generative AI tools with new features and models. The upcoming expansion to include music and video generation tools holds the promise of unlocking even more possibilities for creators. It sparks anticipation about the diverse and innovative ways in which artists will leverage these tools to produce and publish their own unique creations.

For those eager to explore, LimeWire's AI tools are readily accessible for free, providing an opportunity to experiment and delve into the world of generative art. As LimeWire continues to evolve, creators are encouraged to stay tuned for the launch of its forthcoming AI music and video generation tools, promising a future brimming with creative potential and endless artistic exploration.

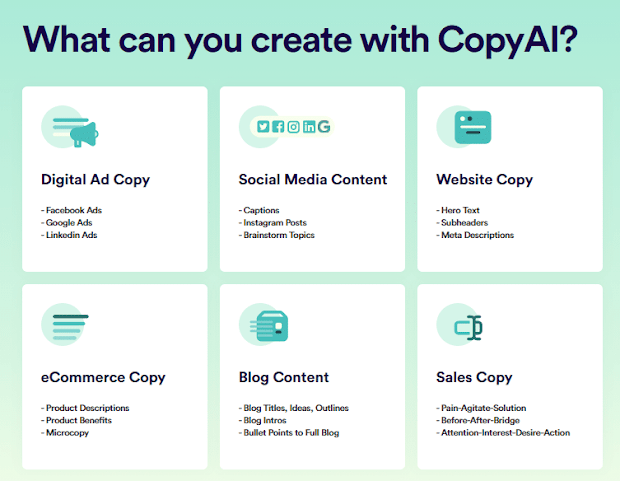

In this article, we explore the top 10 AI tools that are driving innovation and efficiency in various industries. These tools are designed to automate repetitive tasks, improve workflow, and increase productivity. The tools included in our list are some of the most advanced and widely used in the market, and are suitable for a variety of applications. Some of the tools focus on natural language processing, such as ChatGPT and Grammarly, while others focus on image and video generation, such as DALL-E and Lumen5. Other tools, such as OpenAI Codex, Tabnine, Canva, Jasper AI, and Surfer SEO, are designed to help with specific tasks such as code understanding, content writing, and website optimization. This list is a great starting point for anyone looking to explore the possibilities of AI and how it can be applied to their business or project.

So let’s dive in.

ChatGPT is a large language model that generates human-like responses to a variety of prompts. It can be used for tasks such as language translation, question answering, and text completion. It can handle a wide range of topics and styles of writing, and generates coherent and fluent text, but should be used with care as it may generate text that is biased, offensive, or factually incorrect.

Pros:

Cons:

Overall, ChatGPT is a powerful tool for natural language processing, but it should be used with care and with an understanding of its limitations.

DALL-E is a generative model developed by OpenAI that is capable of generating images from text prompts. It is based on the GPT-3 architecture, which is a transformer-based neural network language model that has been trained on a massive dataset of text. DALL-E can generate images that are similar to a training dataset and it can generate high-resolution images that are suitable for commercial use.

Pros:

Cons:

Overall, DALL-E is a powerful AI-based tool for generating images. It can be used for a variety of applications such as creating images for commercial use, gaming, and other creative projects. It is important to note that the generated images should be reviewed and used with care, as they may not be entirely original and could be influenced by the training data.

Lumen5 is a content creation platform that uses AI to help users create videos, social media posts, and other types of content. It has several features that make it useful for content creation and marketing, including:

Pros:

Cons:

Overall, Lumen5 is a useful tool for creating content quickly and easily. It can help automate the process of creating videos, social media posts, and other types of content. However, the quality of the generated content may vary depending on the source material, and it is important to review and edit the content before publishing it.

Grammarly is a writing-enhancement platform that uses AI to check for grammar, punctuation, and spelling errors in the text. It also provides suggestions for improving the clarity, concision, and readability of the text. It has several features that make it useful for improving writing, including:

Pros:

Cons:

OpenAI Codex is a system developed by OpenAI that can create code from natural language descriptions of software tasks. The system is based on the GPT-3 model and can generate code in multiple programming languages.

Pros:

Cons:

Overall, OpenAI Codex is a powerful tool that can help automate the process of writing code and make it more accessible to non-technical people. However, the quality of the generated code may vary depending on the task description, and it is important to review and test the code before using it in a production environment. It is important to use the tool as an aid, not a replacement for the developer's knowledge.

Tabnine is a code completion tool that uses AI to predict and suggest code snippets. It is compatible with multiple programming languages and can be integrated with various code editors.

Pros:

Cons:

Overall, Tabnine is a useful tool for developers that can help improve coding efficiency and reduce the time spent on writing code. However, it is important to review the suggestions provided by the tool and use them with caution, as they may not always be accurate or appropriate. It is important to use the tool as an aid, not a replacement for the developer's knowledge.

Jasper is a content writing and content generation tool that uses artificial intelligence to identify the best words and sentences for your writing style and medium in the most efficient, quick, and accessible way.

Pros:

Cons:

Surfer SEO is a software tool designed to help website owners and digital marketers improve their search engine optimization (SEO) efforts. The tool provides a variety of features that can be used to analyze a website's on-page SEO, including:

Features:

Pros:

Cons:

Overall, Surfer SEO can be a useful tool for website owners and digital marketers looking to improve their SEO efforts. However, it is important to remember that it is just a tool and should be used in conjunction with other SEO best practices. Additionally, the tool is not a guarantee of better ranking.

Zapier is a web automation tool that allows users to automate repetitive tasks by connecting different web applications together. It does this by creating "Zaps" that automatically move data between apps, and can also be used to trigger certain actions in one app based on events in another app.

Features:

Pros:

Cons:

Overall, Zapier is a useful tool that can help users automate repetitive tasks and improve workflow. It can save time and increase productivity by connecting different web applications together. However, it may require some technical skills, and some features may require a paid subscription. It is important to use the tool with caution and not to rely on it too much, so that you still understand the underlying apps.

Compose AI is a company that specializes in developing natural language generation (NLG) software. Their software uses AI to automatically generate written or spoken text from structured data, such as spreadsheets, databases, or APIs.

Features:

Pros:

Cons:

Overall, Compose AI's NLG software can be a useful tool for automating the process of creating written or spoken content from structured data. However, the quality of the generated content may vary depending on the data source, and it is essential to review the generated content before using it in a production environment. It is important to use the tool as an aid, not a replacement for the understanding of the data.

AI tools are becoming increasingly important in today's business and technology landscape. They are designed to automate repetitive tasks, improve workflow, and increase productivity. The top 10 AI tools included in this article are some of the most advanced and widely used in the market, and are suitable for various applications. Whether you're looking to improve your natural language processing, create high-resolution images, or optimize your website, there is an AI tool that can help. It's important to research and evaluate the different tools available to determine which one is the best fit for your specific needs. As AI technology continues to evolve, these tools will become even more powerful and versatile and will play an even greater role in shaping the future of business and technology.

Are you looking for a way to create content that is both effective and efficient? If so, then you should consider using an AI content generator. AI content generators are a great way to create content that is both engaging and relevant to your audience.

There are a number of different AI content generator tools available on the market, and it can be difficult to know which one is right for you. To help you make the best decision, we have compiled a list of the top 10 AI content generator tools that you should use in 2022.

So, without further ado, let’s get started!

Boss Mode: $99/Month

The utility of this service can be used for short-term or format business purposes such as product descriptions, website copy, market copy, and sales reports.

Free Trial – 7 days with 24/7 email support and 100 runs per day.

Pro Plan: $49/month, or $420 if billed yearly (i.e., $35 per month).

Wait! I've got a pretty sweet deal for you. Sign up through the link below, and you'll get (7,000 Free Words Plus 40% OFF) if you upgrade to the paid plan within four days.

Claim Your 7,000 Free Words With This Special Link - No Credit Card Required

Just like Outranking, Frase is an AI tool that helps you research, create, and optimize your content to make it high quality within seconds. Frase focuses on SEO, tailoring content to search engines by optimizing keywords.

Solo Plan: $14.99/Month and $12/Month if billed yearly with 4 Document Credits for 1 user seat.

Basic Plan: $44.99/month and $39.99/month if billed yearly with 30 Document Credits for 1 user seat.

Team Plan: $114.99/month and $99.99/month if billed yearly for unlimited document credits for 3 users.

*SEO Add-ons and other premium features for $35/month irrespective of the plan.

Article Forge is another content generator that operates quite differently from the others on this list. Unlike Jasper.ai, which requires you to provide a brief and some information on what you want it to write, this tool only asks for a keyword. From there, it’ll generate a complete article for you.

What’s excellent about Article Forge is that they provide a 30-day money-back guarantee. You can choose between a monthly or yearly subscription. They offer a free trial but, unfortunately, no free plan:

Basic Plan: $27/Month

This plan allows users to produce up to 25k words each month. This is excellent for smaller blogs or those who are just starting.

Standard Plan: $57/month

Unlimited Plan: $117/month

It’s important to note that Article Forge guarantees that all content generated through the platform passes Copyscape.

Rytr.me is a free AI content generator perfect for small businesses, bloggers, and students. The software is easy to use and can generate SEO-friendly blog posts, articles, and school papers in minutes.

Cons

Pricing

Rytr offers a free plan that comes with limited features. It covers up to 5,000 characters generated each month and has access to the built-in plagiarism checker. If you want to use all the features of the software, you can purchase one of the following plans:

Saver Plan: $9/month, $90/year

Writesonic is a free, easy-to-use AI content generator. The software is designed to help you create copy for marketing content, websites, and blogs. It's also helpful for small businesses or solopreneurs who need to produce content on a budget.

Writesonic is free with limited features. The free plan is more like a free trial, providing ten credits. After that, you’d need to upgrade to a paid plan. Here are your options:

Short-form: $15/month

Features:

Long-Form: $19/month

CopySmith is an AI content generator that can be used to create personal and professional documents, blogs, and presentations. It offers a wide range of features including the ability to easily create documents and presentations.

CopySmith also has several templates that you can use to get started quickly.

CopySmith offers a free trial with no credit card required. After the free trial, the paid plans are as follows:

Starter Plan: $19/month

Hypotenuse.ai is a free online tool that can help you create AI content. It's great for beginners because it allows you to create videos, articles, and infographics with ease. The software has a simple and easy-to-use interface that makes it perfect for new people looking for AI content generation.

Special Features

Hypotenuse doesn’t offer a free plan. Instead, it offers a free trial period where you can take the software for a run before deciding whether it’s the right choice for you or not. Other than that, here are its paid options:

Starter Plan: $29/month

Growth Plan: $59/month

Enterprise – pricing is custom, so don’t hesitate to contact the company for more information.

Kafkai comes with a free trial to help you understand whether it’s the right choice for you or not. Additionally, you can also take a look at its paid plans:

Writer Plan: $29/month. Create 100 articles per month at $0.29/article.

Newsroom Plan: $49/month. Generate 250 articles a month at roughly $0.20/article.

Printing Press Plan: $129/month. Create up to 1,000 articles a month at roughly $0.13/article.

Industrial Printer Plan: $199/month. Generate 2,500 articles each month at roughly $0.08/article.
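The per-article figures above are simply the monthly price divided by the monthly article quota. A minimal sketch of that arithmetic, using only the prices and quotas listed above (the `cost_per_article` helper is an illustrative name):

```python
# Monthly price (USD) and monthly article quota for each plan listed above.
KAFKAI_PLANS = {
    "Writer": (29, 100),
    "Newsroom": (49, 250),
    "Printing Press": (129, 1000),
    "Industrial Printer": (199, 2500),
}


def cost_per_article(monthly_price, articles_per_month):
    """Return the effective price of one article when the full quota is used."""
    return monthly_price / articles_per_month


for name, (price, articles) in KAFKAI_PLANS.items():
    print(f"{name}: ${cost_per_article(price, articles):.2f} per article")
```

These figures assume you use the entire quota each month; generating fewer articles raises the effective cost per article.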

Peppertype.ai is an online AI content generator that’s easy to use and best for small business owners looking for a powerful copy and content writing tool to help them craft and generate various content for many purposes.

Unfortunately, Peppertype.ai isn’t free. However, it does have a free trial to try out the software before deciding whether it’s the right choice for you. Here are its paid plans:

Personal Plan: $35/month

Team Plan: $199/month

Enterprise – pricing is custom, so please contact the company for more information.

It is no longer a secret that humans are getting overwhelmed with the daily task of creating content. Our lives are busy, and the process of writing blog posts, video scripts, or other types of content is not our day job. In comparison, AI writers are not only cheaper to hire, but also perform tasks at a high level of excellence. This article explores 10 writing tools that use AI to create better content. Choose the one that meets your requirements and budget; in my opinion, Jasper AI is one of the best tools for making high-quality content.

If you have any questions, ask in the comments section.

Note: Don't post links in your comments

Note: This article contains affiliate links which means we make a small commission if you buy any premium plan from our link.

Image by vectorjuice on Freepik

The marketing industry is turning to artificial intelligence (AI) as a way to save time and execute smarter, more personalized campaigns. 61% of marketers say AI software is the most important aspect of their data strategy.

If you’re late to the AI party, don’t worry. It’s easier than you think to start leveraging artificial intelligence tools in your marketing strategy. Here are 11 AI marketing tools every marketer should start using today.

Personalize is an AI-powered technology that helps you identify and produce highly targeted sales and marketing campaigns by tracking the products and services your contacts are most interested in at any given time. The platform uses an algorithm to identify each contact’s top three interests, which are updated in real-time based on recent site activity.

Key Features

Seventh Sense provides behavioral analytics that help you win attention in your customers’ overcrowded email inboxes. Choosing the best day and time to send an email is always a gamble. And while some days of the week generally get higher open rates than others, you’ll never be able to nail down a time that’s best for every customer. Seventh Sense eases your stress of having to figure out the perfect send-time and day for your email campaigns. The AI-based platform figures out the best timing and email frequency for each contact based on when they’re opening emails. The tool is primarily geared toward HubSpot and Marketo customers.

Key Features

Phrasee uses artificial intelligence to help you write more effective subject lines. With its AI-based Natural Language Generation system, Phrasee uses data-driven insights to generate millions of natural-sounding copy variants that match your brand voice. The model is end-to-end, meaning when you feed the results back to Phrasee, the prediction model rebuilds so it can continuously learn from your audience.

Key Features

HubSpot Search Engine Optimization (SEO) is an integral tool for the Human Content team. It uses machine learning to determine how search engines understand and categorize your content. HubSpot SEO helps you improve your search engine rankings and outrank your competitors. Search engines reward websites that organize their content around core subjects, or topic clusters. HubSpot SEO helps you discover and rank for the topics that matter to your business and customers.

Key Features

When you’re limited to testing two variables against each other at a time, it can take months to get the results you’re looking for. Evolv AI lets you test all your ideas at once. It uses advanced algorithms to identify the top-performing concepts, combine them with each other, and repeat the process to achieve the best site experience.

Key Features

Acrolinx is a content alignment platform that helps brands scale and improve the quality of their content. It’s geared toward enterprises (its major customers include big brands like Google, Adobe, and Amazon) to help them scale their writing efforts. Instead of spending time chasing down and fixing typos in multiple places throughout an article or blog post, you can use Acrolinx to do it all right there in one place. You start by setting your preferences for style, grammar, tone of voice, and company-specific word usage. Then, Acrolinx checks and scores your existing content to find what’s working and suggest areas for improvement. The platform provides real-time guidance and suggestions to make writing better and strengthen weak pages.

Key features

MarketMuse uses an algorithm to help marketers build content strategies. The tool shows you where to target keywords to rank in specific topic categories, and recommends keywords you should go after if you want to own particular topics. It also identifies gaps and opportunities for new content and prioritizes them by their probable impact on your rankings. The algorithm compares your content with thousands of articles related to the same topic to uncover what’s missing from your site.

Key features:

Copilot is a suite of tools that help eCommerce businesses maintain real-time communication with customers around the clock at every stage of the funnel. Promote products, recover shopping carts and send updates or reminders directly through Messenger.

Key features:

Yotpo’s deep learning technology evaluates your customers’ product reviews to help you make better business decisions. It identifies key topics that customers mention related to your products—and their feelings toward them. The AI engine extracts relevant reviews from past buyers and presents them in smart displays to convert new shoppers. Yotpo also saves you time moderating reviews. The AI-powered moderation tool automatically assigns a score to each review and flags reviews with negative sentiment so you can focus on quality control instead of manually reviewing every post.

Key features:

Albert is a self-learning software that automates the creation of marketing campaigns for your brand. It analyzes vast amounts of data to run optimized campaigns autonomously, allowing you to feed in your own creative content and target markets, and then use data from its database to determine key characteristics of a serious buyer. Albert identifies potential customers that match those traits, and runs trial campaigns on a small group of customers—with results refined by Albert himself—before launching it on a larger scale.

Albert plugs into your existing marketing technology stack, so you still have access to your accounts, ads, search, social media, and more. Albert maps tracking and attribution to your source of truth so you can determine which channels are driving your business.

Key features:

There are many tools and companies out there that offer AI tools, but this is a small list of resources that we have found to be helpful. If you have any other suggestions, feel free to share them in the comments below this article. As marketing evolves at such a rapid pace, new marketing strategies will be invented that we haven't even dreamed of yet. But for now, this list should give you a good starting point on your way to implementing AI into your marketing mix.

Note: This article contains affiliate links, meaning we make a small commission if you buy any premium plan from our link.

There are lots of questions floating around about how affiliate marketing works and what to do and what not to do when setting up a business. With so much uncertainty surrounding both the personal and business aspects of affiliate marketing, this post answers the most frequently asked questions about it.

Affiliate marketing is a way to make money by promoting the products and services of other people and companies. You don't need to create your own product or service, just promote existing ones. That's why it's so easy to get started with affiliate marketing. You can even get started with no budget at all!

An affiliate program is a package of information you create for your product, which is then made available to potential publishers. The program will typically include details about the product and its retail value, commission levels, and promotional materials. Many affiliate programs are managed via an affiliate network like ShareASale, which acts as a platform to connect publishers and advertisers, but it is also possible to offer your program directly.

Affiliate networks connect publishers to advertisers. Affiliate networks make money by charging fees to the merchants who advertise with them; these merchants are known as advertisers. The percentage of each sale that the advertiser pays is negotiated between the merchant and the affiliate network.

Dropshipping is a method of selling that allows you to run an online store without having to stock products. You advertise the products as if you owned them, but when someone makes an order, you create a duplicate order with the distributor at a reduced price. The distributor takes care of the post and packaging on your behalf. Affiliate marketing is based on referrals and, like this type of dropshipping, requires no investment in inventory; when a customer buys through the affiliate link, no money changes hands.

Performance marketing is a method of marketing that pays for performance, such as when a sale is made or an ad is clicked. This can include methods like PPC (pay-per-click) or display advertising. Affiliate marketing is one form of performance marketing, where commissions are paid out to affiliates on a performance basis when a visitor clicks on their affiliate link and makes a purchase or completes an action.

Smartphones are essentially miniature computers, so publishers can display the same websites and offers that are available on a PC. But mobiles also offer specific tools not available on computers, and these can be used to good effect for publishers. Publishers can optimize their ads for mobile users by making them easy to access by this audience. Publishers can also make good use of text and instant messaging to promote their offers. As the mobile market is predicted to make up 80% of traffic in the future, publishers who do not promote on mobile devices are missing out on a big opportunity.

The best way to find affiliate publishers is on reputable networks like ShareASale, CJ (Commission Junction), Awin, and Impact Radius. These networks have a strict application process and compliance checks, which means that all affiliates are trustworthy.

An affiliate disclosure statement discloses to the reader that there may be affiliate links on a website, for which a commission may be paid to the publisher if visitors follow these links and make purchases.

Publishers promote their programs through a variety of means, including blogs, websites, email marketing, and pay-per-click ads. Social media has a huge interactive audience, making this platform a good source of potential traffic.

A super affiliate is an affiliate partner who consistently drives a large majority of sales from any program they promote, compared to other affiliate partners involved in that program. Affiliates can make a lot of money from affiliate marketing: Pat Flynn earned more than $50,000 in 2013 from it.

Publishers can be identified by their publisher ID, which is used in tracking cookies to determine which publishers generate sales. The activity is then viewed within a network's dashboard.

Because the Internet is so widespread, affiliate programs can be promoted in any country. Affiliate strategies that are set internationally need to be tailored to the language of the targeted country.

Affiliate marketing can help you grow your business in the following ways:

One of the best ways to work with qualified affiliates is to hire an affiliate marketing agency that works with all the networks. Affiliates are carefully selected and go through a rigorous application process to be included in the network.

Affiliate marketing is generally associated with websites, but there are other ways to promote your affiliate links, including:

To build your affiliate marketing business, you don't have to invest money in the beginning. You can sign up for free with any affiliate network and start promoting their brands right away.

Commission rates are typically based on a percentage of the total sale and in some cases can also be a flat fee for each transaction. The rates are set by the merchant.
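As a rough illustration of the two commission models just described, the sketch below computes a payout either as a percentage of the sale or as a flat fee per transaction. The function name, parameters, and sample numbers are hypothetical; real rates are set by each merchant.

```python
def commission(sale_total, rate=None, flat_fee=None):
    """Return the affiliate payout for one transaction.

    Pass `rate` (e.g. 0.10 for 10%) for a percentage commission,
    or `flat_fee` for a fixed amount per transaction.
    """
    if (rate is None) == (flat_fee is None):
        raise ValueError("Provide exactly one of rate or flat_fee")
    if flat_fee is not None:
        return round(flat_fee, 2)
    return round(sale_total * rate, 2)


# Hypothetical examples: a 10% commission and a $15 flat fee on a $200 sale.
percentage_payout = commission(200.00, rate=0.10)
flat_payout = commission(200.00, flat_fee=15.00)
print(percentage_payout, flat_payout)
```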

Who manages your affiliate program?

Some merchants run their affiliate programs internally, while others choose to contract out management to a network or an external agency.

Cookies are small pieces of data that work with web browsers to store information such as user preferences, login or registration data, and shopping cart contents. When someone clicks on your affiliate link, a cookie is placed on the user's computer or mobile device. That cookie is used to remember the link or ad that the visitor clicked on. Even if the user leaves your site and comes back a week later to make a purchase, you will still get credit for the sale and receive a commission, provided the cookie has not expired; this depends on the site's cookie duration.

The merchant determines the duration of a cookie, also known as its “cookie life.” The most common length for an affiliate program is 30 days. If someone clicks on your affiliate link, you’ll be paid a commission if they purchase within 30 days of the click.
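The cookie-life rule can be expressed as a simple date check. This is a minimal sketch, assuming the attribution window is counted in whole days from the click; the function name and the 30-day default are illustrative, and real networks may apply additional rules.

```python
from datetime import datetime, timedelta


def sale_is_attributed(click_time, purchase_time, cookie_life_days=30):
    """Return True if the purchase falls inside the cookie's lifetime."""
    window_end = click_time + timedelta(days=cookie_life_days)
    return click_time <= purchase_time <= window_end


click = datetime(2024, 1, 1, 12, 0)

# A purchase one week after the click is still credited to the affiliate.
print(sale_is_attributed(click, click + timedelta(days=7)))

# A purchase 31 days after the click falls outside a 30-day cookie life.
print(sale_is_attributed(click, click + timedelta(days=31)))
```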


Imagine a world in which you can do transactions and many other things without having to give your personal information. A world in which you don’t need to rely on banks or governments anymore. Sounds amazing, right? That’s exactly what blockchain technology allows us to do.

It’s like your computer’s hard drive: blockchain is a technology that lets you store data in digital blocks, which are connected together like links in a chain.

Blockchain technology was first described in 1991 by two researchers, Stuart Haber and W. Scott Stornetta. They proposed the system to ensure that document timestamps could not be tampered with.

A few years later, in 1998, software developer Nick Szabo proposed using a similar kind of technology to secure a digital payments system he called “Bit Gold.” However, the idea was never put into practice, and the first working blockchain arrived only when the pseudonymous Satoshi Nakamoto introduced Bitcoin in 2008.

A blockchain is a distributed database shared between the nodes of a computer network. It saves information in digital format. Many people first heard of blockchain technology when they started to look up information about bitcoin.

Blockchain is used in cryptocurrency systems to ensure secure, decentralized records of transactions.

Blockchain allows people to guarantee the fidelity and security of a record of data without the need for a third party to ensure accuracy.

To understand how a blockchain works, consider these basic steps:

Let’s get to know more about the blockchain.

Blockchain records digital information and distributes it across the network without changing it. The information is distributed among many users and stored in an immutable, permanent ledger that can't be changed or destroyed. That's why blockchain is also called "Distributed Ledger Technology" or DLT.

Here’s how it works:

And that’s the beauty of it! The process may seem complicated, but it’s done in minutes with modern technology. And because technology is advancing rapidly, I expect things to move even more quickly in the future.

Even though blockchain is integral to cryptocurrency, it has other applications, and many people confuse the technology with cryptocurrencies like Bitcoin and Ethereum. For example, blockchain can be used to store reliable data about any kind of transaction.

Blockchain is already being adopted by some big-name companies, such as Walmart, AIG, Siemens, Pfizer, and Unilever. For example, IBM's Food Trust uses blockchain to track food's journey before reaching its final destination.

Although some of you may consider this practice excessive, food suppliers and manufacturers adhere to the policy of tracing their products because bacteria such as E. coli and Salmonella have been found in packaged foods. In addition, there have been isolated cases where dangerous allergens such as peanuts have accidentally been introduced into certain products.

Tracing and identifying the source of an outbreak is a challenging task that can take months or years. With blockchain, however, companies can see where their food has been at each step, so they can trace a contaminated product back to its source and help prevent future outbreaks.

Blockchain technology allows systems to react much faster in the event of a hazard. It also has many other uses in the modern world.

Blockchain technology is safe, even if it’s public. People can access the technology using an internet connection.

Have you ever been in a situation where you had all your data stored in one place and that one secure place got compromised? Wouldn't it be great if there were a way to prevent your data from leaking out even when the security of your storage systems is compromised?

Blockchain technology provides a way of avoiding this situation by using multiple computers at different locations to store information about transactions. If one computer experiences problems with a transaction, it will not affect the other nodes.

Instead, the other nodes cross-reference their own copies of the data to identify and correct the faulty node. This is called “decentralization,” meaning all the information is stored in multiple places.
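To make the cross-referencing idea concrete, here is a deliberately simplified Python sketch in which the record held by most nodes wins. The names `consensus_value` and `faulty_nodes` and the sample records are hypothetical. Real blockchain networks rely on full consensus protocols rather than a plain majority count, so treat this only as an illustration of the principle.

```python
from collections import Counter
from typing import Dict, List


def consensus_value(copies: Dict[str, str]) -> str:
    """Return the record that a strict majority of nodes agree on."""
    value, count = Counter(copies.values()).most_common(1)[0]
    if count <= len(copies) / 2:
        raise ValueError("no majority among nodes")
    return value


def faulty_nodes(copies: Dict[str, str]) -> List[str]:
    """List the nodes whose copy differs from the majority record."""
    agreed = consensus_value(copies)
    return sorted(node for node, record in copies.items() if record != agreed)


# Three hypothetical nodes; node_c holds a corrupted copy of the record.
copies = {
    "node_a": "alice pays bob 5",
    "node_b": "alice pays bob 5",
    "node_c": "alice pays bob 500",
}
print(consensus_value(copies))
print(faulty_nodes(copies))
```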

Blockchain guarantees your data's authenticity—not just its accuracy, but also its irreversibility. It can also be used to store data that are difficult to register, like legal contracts, state identifications, or a company's product inventory.

Blockchain has many advantages and disadvantages.

I’ll answer the most frequently asked questions about blockchain in this section.

Blockchain is not a cryptocurrency but a technology that makes cryptocurrencies possible. It's a digital ledger that records every transaction seamlessly.

Yes, a blockchain can theoretically be hacked, but doing so is extremely difficult. A network of users constantly reviews the ledger, which makes tampering with it hard to pull off.

Coinbase Global is currently the biggest blockchain company in the world. The company runs a commendable infrastructure, services, and technology for the digital currency economy.

Blockchain is a decentralized technology. It’s a distributed ledger maintained by a network of nodes, and each node can be any electronic device. Thus, no one owns blockchain.

Bitcoin is a cryptocurrency powered by blockchain technology, while blockchain is the distributed ledger that records cryptocurrency transactions.

Generally, a database is a collection of data that is stored and organized using a database management system. People who have access to the database can view or edit the information stored there, and databases are typically implemented with a client-server network architecture. A blockchain, by contrast, is a growing list of records, called blocks, stored in a distributed system. Each block contains a cryptographic hash of the previous block, a timestamp, and transaction information. By design, data cannot be modified once it has been recorded. The technology allows decentralized control and greatly reduces the risk of data modification by other parties.
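The block structure described above (a hash of the previous block, a timestamp, and transaction information) can be sketched in a few lines of Python. This is a toy model, not how any production blockchain is implemented: the helper names `block_hash`, `add_block`, and `is_valid` are hypothetical, and there is no networking, mining, or consensus here. It only shows why editing an old block is detectable.

```python
import hashlib
import json
import time
from typing import Any, Dict, List

GENESIS_PREVIOUS_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(block: Dict[str, Any]) -> str:
    """SHA-256 over the block's contents (everything except its own hash)."""
    fields = ("index", "timestamp", "transactions", "previous_hash")
    payload = json.dumps({k: block[k] for k in fields}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def add_block(chain: List[Dict[str, Any]], transactions: List[str]) -> None:
    """Append a block that points back to the hash of the current last block."""
    block = {
        "index": len(chain),
        "timestamp": time.time(),
        "transactions": transactions,
        "previous_hash": chain[-1]["hash"] if chain else GENESIS_PREVIOUS_HASH,
    }
    block["hash"] = block_hash(block)
    chain.append(block)


def is_valid(chain: List[Dict[str, Any]]) -> bool:
    """Check every block's own hash and its link to the block before it."""
    for i, block in enumerate(chain):
        expected_previous = chain[i - 1]["hash"] if i > 0 else GENESIS_PREVIOUS_HASH
        if block["previous_hash"] != expected_previous:
            return False
        if block["hash"] != block_hash(block):
            return False
    return True


chain: List[Dict[str, Any]] = []
add_block(chain, ["alice pays bob 5"])
add_block(chain, ["bob pays carol 2"])
print(is_valid(chain))  # True: the chain is intact

chain[0]["transactions"] = ["alice pays bob 500"]  # tamper with an old block
print(is_valid(chain))  # False: the stored hash no longer matches the contents
```

Because each block stores the previous block's hash, changing any earlier block would require recomputing every block after it, which is what makes silent modification impractical on a shared ledger.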

Blockchain has a wide spectrum of applications and, over the next 5-10 years, we will likely see it being integrated into all sorts of industries. From finance to healthcare, blockchain could revolutionize the way we store and share data. Although there is some hesitation to adopt blockchain systems right now, that won't be the case in 2022-2023 (and even less so in 2026). Once people become more comfortable with the technology and understand how it can work for them, owners, CEOs, and entrepreneurs alike will be quick to leverage blockchain technology for their own gain. I hope you liked this article. If you have any questions, let me know in the comments section.


Grammarly is a tool that checks for grammatical errors, spelling, and punctuation. It gives you comprehensive feedback on your writing. You can use this tool to proofread and edit articles, blog posts, emails, etc.

Grammarly also detects all types of mistakes, including sentence structure issues and misused words. It also gives you real-time suggestions on style, punctuation, spelling, and grammar. The free version covers the basics, like identifying grammar and spelling mistakes, whereas the Premium version offers a lot more functionality: it detects plagiarism in your content, suggests better word choices, and improves fluency.

ProWritingAid is a style and grammar checker for content creators and writers. It helps you optimize word choice and fix punctuation errors and common grammar mistakes, providing detailed reports to help you improve your writing.

ProWritingAid can be used as an add-on to WordPress, Gmail, and Google Docs. The software also offers helpful articles, videos, quizzes, and explanations to help improve your writing.

Here are some key features of ProWritingAid:

Grammarly and ProWritingAid are well-known grammar-checking software. However, if you're like most people who can't decide which to use, here are some different points that may be helpful in your decision.

As both writing assistants are great in their own way, you need to choose the one that suits you best.

Both ProWritingAid and Grammarly are awesome writing tools, without a doubt. But in my experience, Grammarly is the winner here because it helps you review and edit your content quickly. Grammarly highlights all the mistakes in your writing within seconds of copying and pasting the content into Grammarly’s editor or using the software’s native feature in other text editors.

Not only does it identify tiny grammatical and spelling errors, it also tells you when you have left out punctuation where it is needed. And, beyond its plagiarism-checking capabilities, Grammarly helps you proofread your content. Even better, the software offers a free plan that gives you access to some of its features.

Are you searching for an eCommerce platform to help you build an online store and sell products?

In this Sellfy review, we'll talk about how this eCommerce platform can let you sell digital products while keeping full control of your marketing.

And the best part? Starting your business can be done in just five minutes.

Let us then talk about the Sellfy platform and all the benefits it can bring to your business.

Sellfy is an eCommerce solution that allows digital content creators, including writers, illustrators, designers, musicians, and filmmakers, to sell their products online. Sellfy provides a customizable storefront where users can display their digital products and embed "Buy Now" buttons on their website or blog. Sellfy product pages enable users to showcase their products from different angles with multiple images and previews from Soundcloud, Vimeo, and YouTube. Files of up to 2GB can be uploaded to Sellfy, and the company offers unlimited bandwidth and secure file storage. Users can also embed their entire store or individual product widgets in their site, with the ability to preview how widgets will appear before they are displayed.

Sellfy includes:

Sellfy is a powerful e-commerce platform that helps you personalize your online storefront. You can add your logo, change colors, revise navigation, and edit the layout of your store. Sellfy also allows you to create a full shopping cart so customers can purchase multiple items. And Sellfy gives you the ability to set your language or let customers see a translated version of your store based on their location.

Sellfy gives you the option to host your store directly on its platform, add a custom domain to your store, and use it as an embedded storefront on your website. Sellfy also optimizes its store offerings for mobile devices, allowing for a seamless checkout experience.

Sellfy allows creators to host and sell all of their digital products on one platform. Sellfy also does not place storage limits on your store but recommends that files be no larger than 5GB. Creators can sell both standard and subscription-based products in any file format that is supported by the online marketplace. Customers can download products instantly after making a purchase; there is no waiting period.

You can organize your store by creating your own product categories and sorting by any characteristic you choose. The title, description, and image are included on each product page, so customers can immediately evaluate all of your products. You can offer different pricing options for all of your products, including "pay what you want," in which the price is entirely up to the customer. This option allows you to give customers control over the cost of individual items (without a minimum price) or to set pricing minimums, which is a good option if you're in a competitive market or when you have higher-end products. You can also offer set prices per product as well as free products to help build your store's popularity.

Sellfy is ideal for selling digital content, such as ebooks. But it does not allow you to sell copyrighted material that you don't have the rights to distribute.

Sellfy offers several ways to share your store, enabling you to promote your business on different platforms. Sellfy lets you integrate it with your existing website using "buy now" buttons, embed your entire storefront, or embed certain products so you can reach more people. Sellfy also enables you to connect with your Facebook page and YouTube channel, maximizing your visibility.

Sellfy is a simple online platform that allows customers to buy your products directly through your store. Sellfy has two payment processing options: PayPal and Stripe. You will receive instant payments with both of these processors, and your customer data is protected by Sellfy's secure (PCI-compliant) payment security measures. In addition to payment security, Sellfy provides anti-fraud tools to help protect your products including PDF stamping, unique download links, and limited download attempts.

The Sellfy platform includes marketing and analytics tools to help you manage your online store. You can send email product updates and collect newsletter subscribers through the platform. With Sellfy, you can also offer discount codes and product upsells, as well as create and track Facebook and Twitter ads for your store. The software's analytics dashboard will help you track your best-performing products, generated revenue, traffic channels, top locations, and overall store performance.

To expand functionality and make your e-commerce store run more efficiently, Sellfy offers several integrations. Google Analytics and Webhooks, as well as integrations with Patreon and Facebook Live Chat, are just a few of the options available. Sellfy allows you to connect to Zapier, which gives you access to hundreds of third-party apps, including tools like Mailchimp, Trello, Salesforce, and more.

The free plan comes with:

The Starter plan comes with: